Wright Standards

INDEPENDENT REVIEW FOR AI AND AUTOMATED WORKFLOWS BEFORE MISTAKES REACH CUSTOMERS

Wright Standards helps teams catch workflow failures, inconsistent outputs, and data integrity issues before they become customer, operational, or reporting problems.

Why this matters

When AI or workflow logic fails, the damage often starts quietly. A small issue can turn into a customer mistake, reporting error, or leadership blind spot. Wright Standards gives teams an independent review before those problems spread.

• Independent review beyond internal QA

• Severity-ranked findings with a clear action roadmap

• Reporting that teams and leadership can use

Built on experience in QA, compliance, audit readiness, and workflow review across high-volume and regulated environments.

Illustrative outcomes

Review outcomes may include launch-pause recommendations, escalation-control gaps, reporting-risk identification, and re-review readiness.

Services

Wright Standards provides independent review for AI-enabled systems and automated workflows where errors could create customer, operational, reporting, or reputational risk.

Independent Workflow Review

Review of workflow behavior, outputs, exception handling, and escalation logic to identify failures that may not be obvious during internal testing.

Data Migration Review

Review of mappings, transformations, record handling, and linkage logic to identify integrity issues before migration errors affect operations or reporting.

Workflow Integrity Assessment

Targeted review of a defined workflow or use case to assess whether outputs are stable, defensible, and fit for real-world use.

Pilot Review

A short, structured engagement designed to evaluate a specific workflow, identify meaningful issues, rank severity, and provide prioritized next steps.

Wright Standards reviews workflows. It does not build or implement them.

Many engagements begin with a scoped Pilot Review before broader review work is considered.

Review Options

Reviews are scoped by workflow risk, complexity, and where an independent second look will create the most value.

Recommended starting point for most first-time engagements

Pilot Review

Best for one workflow, a limited-scope launch, or a newly deployed use case that needs a fast, structured second look before broader rollout.

Typical timeline

Usually 1–2 weeks, depending on workflow access, materials provided, and review scope.

Most common starting point for

Teams with one workflow, one launch decision, or one defined concern they want independently reviewed quickly.

Typical scope

• Review of 1–2 workflows

• Targeted testing at key control points

• Identification of likely failure modes and severity

Typical deliverables

• Findings summary

• Risk rating

• Prioritized next-step roadmap

Structured Review

Best for production workflows or higher-risk systems that need a more formal independent review.

Typical timeline

Usually 2–4 weeks, depending on system boundary, workflow complexity, and review depth.

Most common starting point for

Teams with a live production workflow, multiple decision points, or higher-consequence exposure.

Typical scope

• Review across a defined system boundary

• Assessment of workflow logic, outputs, and exception handling

• Review of data handling, traceability, and escalation points

Typical deliverables

• Integrity assessment report

• Defect and severity matrix

• Corrective action roadmap

• Executive-ready findings summary

Ongoing Oversight

Best for organizations that need periodic re-review after launch, during phased rollout, or as workflows change over time.

Typical timeline

Usually 2–4 weeks, depending on system boundary, workflow complexity, and review depth.

Most common starting point for

Teams with a live production workflow, multiple decision points, or higher-consequence exposure.

Typical scope

• Periodic re-review of workflows and findings

• Follow-up assessment of known risk areas

• Ongoing visibility into stability and escalation concerns

Typical deliverables

• Integrity summary updates

• Findings log updates

• Follow-up recommendations

Each engagement is custom-scoped to the level of risk involved.

HOW THE REVIEW WORKS

Wright Standards uses a structured review process to define scope, test behavior, rank issues by consequence, and document priorities.

1. Define scope

Identify the workflow, outputs, decision points, dependencies, and risk areas.

2. Review logic and outputs

Assess workflow behavior, exception handling, transformations, escalation points, and output consistency.

3. Rank and report

Classify findings by business consequence and document practical next steps for the team.

The process is designed to be structured and defensible without becoming unnecessarily heavy.

WHO THIS IS FOR

Wright Standards is best suited for organizations using AI or automation in ways that could create customer, operational, or reporting risk if outputs are wrong.

Strong fit for:

• companies using AI in customer support, routing, classification, or internal decision workflows

• teams migrating or transforming data between systems

• organizations deploying automated workflows with customer-facing or operational impact

• teams preparing for launch and wanting an independent second look

• organizations that have experienced workflow failures, inconsistent outputs, or confidence issues after deployment

• leadership teams that need a clearer picture of workflow risk beyond internal testing alone

Not the right fit for:

• teams looking for a vendor to build or implement their AI system

• organizations seeking general marketing automation help

• very early experiments that are not yet tied to meaningful operational or customer impact

Best fit is where a quiet failure could become an expensive one.

Examples of what we catch

Independent review matters most when a problem can survive internal testing and surface later in real-world use.

Examples include:

• unstable outputs across similar inputs

• broken escalation logic or missed exception paths

• incorrect categorization, routing, or workflow branching

• data mapping and linkage issues after migration or transformation

• outputs that appear valid but are operationally wrong

• edge cases internal teams did not test deeply enough

• small repeated errors that create larger risk at scale

Many issues do not look serious in isolation. The real risk is often repetition, accumulation, or hidden exposure. That is why findings are ranked by consequence, not just technical imperfection.
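To make the idea of ranking by consequence concrete, here is a minimal Python sketch. It is an illustration only, not the scoring method Wright Standards uses: the `Finding` fields, the 1 to 5 impact scale, and the monthly volume estimates are all hypothetical assumptions. It shows how a small error that repeats often can outrank a more dramatic but rare defect.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Finding:
    name: str
    impact_per_occurrence: int  # hypothetical scale: 1 (minor) to 5 (severe)
    occurrences_per_month: int  # estimated volume; an assumption for this sketch

    @property
    def exposure(self) -> int:
        # Consequence grows with repetition, not just with how bad one instance is.
        return self.impact_per_occurrence * self.occurrences_per_month


def rank_by_consequence(findings: Iterable[Finding]) -> List[Finding]:
    """Order findings from highest to lowest estimated exposure."""
    return sorted(findings, key=lambda f: f.exposure, reverse=True)


# Made-up findings, used only to demonstrate the ordering.
findings = [
    Finding("Rare crash on malformed input", 4, 2),
    Finding("Misrouted low-priority ticket", 1, 900),
    Finding("Truncated address field", 2, 150),
]

ranked = rank_by_consequence(findings)
for f in ranked:
    print(f"{f.exposure:>5}  {f.name}")
```

In this toy data the misrouted ticket ranks first even though each instance is minor, because it happens hundreds of times a month. A real review would weigh more than a single product of two numbers.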

Deliverables

Each review is scoped to the workflow and level of risk involved, but the goal is always the same: clear outputs that help teams decide what to address first.

Typical outputs include:

• a findings summary in plain English

• a defect log showing identified issues

• a severity matrix to help prioritize risk

• a corrective action roadmap with recommended next steps

• an executive-ready summary for leadership or stakeholders

• optional follow-up review after changes are made

So teams can:

• understand what was found

• separate critical issues from lower-priority issues

• communicate risk more clearly internally

• decide what to remediate before launch, scale, or wider exposure

Wright Standards identifies and documents issues. Your team decides what to change and when.

CASE EXAMPLES

These anonymized examples show the types of workflows Wright Standards may review, the issues identified, and the kinds of recommendations provided.

Example 1 – AI Hiring Workflow

The challenge

A fast-growing HR software company used an AI assistant to screen and rank candidates. Leadership wanted efficiency, but needed to understand whether inconsistent classifications or weak explanation logic could create hiring risk.

The review

Wright Standards reviewed a defined hiring workflow, analyzed anonymized outputs, tested paired candidate comparisons, and ranked findings by severity based on business consequence.

What was found

The review found high-confidence classifications without clear explanation, plus measurable inconsistency across similarly qualified candidates. Overall risk was classified as ORANGE, meaning escalation was recommended.

What changed

Recommended actions included explanation requirements tied to job criteria, uncertainty language for borderline cases, paired consistency testing, audit logging, and escalation triggers for high-severity events.
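As a rough illustration of what paired consistency testing can look like, the Python sketch below measures how often a classifier gives different results for two profiles that should be treated the same. Everything in it is hypothetical: the `toy_classifier` is a deliberately flawed stand-in, not the client's system, and the profile fields are invented for the example.

```python
from typing import Callable, Dict, Iterable, Tuple

Profile = Dict[str, object]


def paired_inconsistency_rate(
    pairs: Iterable[Tuple[Profile, Profile]],
    classify: Callable[[Profile], str],
) -> float:
    """Fraction of matched pairs that receive different classifications."""
    pairs = list(pairs)
    if not pairs:
        return 0.0
    mismatches = sum(1 for a, b in pairs if classify(a) != classify(b))
    return mismatches / len(pairs)


def toy_classifier(profile: Profile) -> str:
    # Deliberately flawed stand-in: the result depends on how the job title
    # is capitalized, which has nothing to do with qualifications.
    return "advance" if str(profile["title"]).istitle() else "reject"


# Each pair holds two profiles with equivalent qualifications (made-up data).
pairs = [
    ({"years": 5, "title": "Senior Analyst"}, {"years": 5, "title": "senior analyst"}),
    ({"years": 3, "title": "Data Engineer"}, {"years": 3, "title": "Data Engineer"}),
]

rate = paired_inconsistency_rate(pairs, toy_classifier)
print(f"Inconsistent pairs: {rate:.0%}")
```

A nonzero rate on pairs that differ only in irrelevant details is the kind of signal that would prompt closer review. The threshold for concern is a judgment call that depends on the workflow.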

Why it matters

The goal of the review was not to rebuild the system. It was to identify risk before the workflow created larger fairness, reputational, or governance problems.

Business impact

This review gave leadership a clearer basis for deciding whether to pause, adjust, or more tightly govern the rollout before hiring-risk issues became more visible.

Example 2 – Data Migration Workflow

The challenge

A company preparing for a system migration needed confidence that records, mappings, and transformed fields would remain accurate before the new workflow affected reporting and operations.

The review

Wright Standards reviewed a defined migration workflow, examined field mappings, checked transformation logic, and looked for mismatches, missing linkages, and reporting-impacting errors.

What was found

The review found inconsistent mapping treatment across key fields, missing linkage logic in certain record paths, and several transformation errors that could have produced inaccurate reporting after deployment.

What changed

Recommended actions included mapping corrections, validation checkpoints for transformed fields, expanded exception testing, and a clearer escalation path for reporting-sensitive discrepancies.
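A validation checkpoint of this kind can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the checks used in the engagement: the record shapes, the `customer_id` link field, and the sample data are all hypothetical. It looks for two quiet problems: source records that never arrived, and migrated records whose link points at a parent that does not exist.

```python
from typing import Dict, Iterable, List


def find_missing_ids(source_ids: Iterable[int], target_ids: Iterable[int]) -> List[int]:
    """Return source record IDs that never arrived in the target system."""
    return sorted(set(source_ids) - set(target_ids))


def find_orphans(
    children: Iterable[Dict[str, int]],
    parent_ids: Iterable[int],
    link_field: str,
) -> List[int]:
    """Return IDs of child records whose link points at a nonexistent parent."""
    known = set(parent_ids)
    return [child["id"] for child in children if child[link_field] not in known]


# Made-up data: customer 2 was dropped during migration, so order 11 is orphaned.
source_customer_ids = [1, 2, 3]
migrated_customer_ids = [1, 3]
migrated_orders = [
    {"id": 10, "customer_id": 1},
    {"id": 11, "customer_id": 2},
    {"id": 12, "customer_id": 3},
]

missing = find_missing_ids(source_customer_ids, migrated_customer_ids)
orphans = find_orphans(migrated_orders, migrated_customer_ids, "customer_id")
print("Missing customers:", missing)
print("Orphaned orders:", orphans)
```

Neither problem would necessarily raise an error during migration. Both would surface later as reporting gaps, which is why a check like this belongs before deployment.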

Why it matters

The goal of the review was to catch quiet data integrity issues before they became operational problems, reporting errors, or time-consuming remediation work after launch.

Business impact

This review helped surface reporting and operational risk before migration errors created downstream cleanup work after deployment.

These examples are anonymized and included to illustrate review structure, findings style, and deliverables.

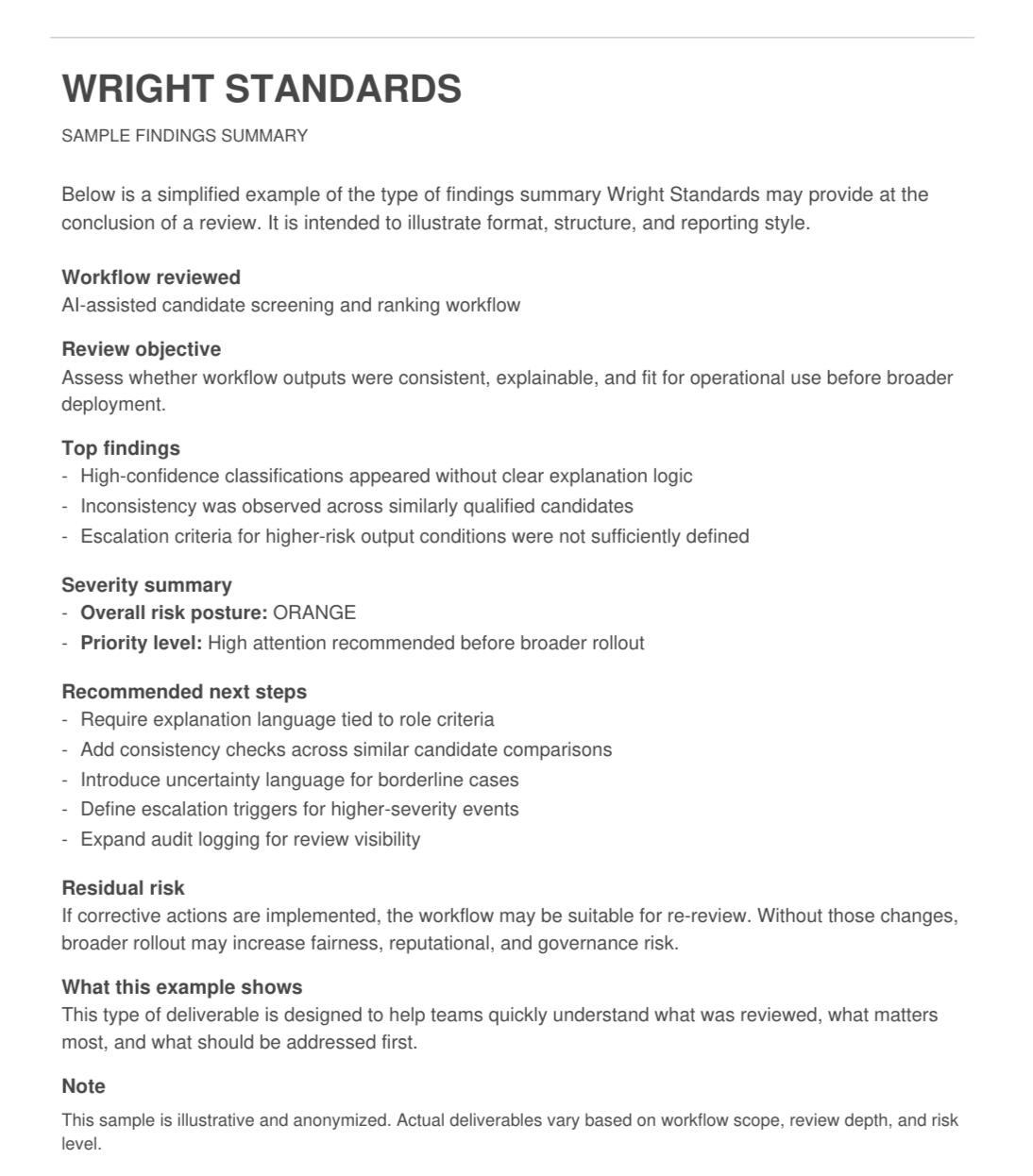

SAMPLE FINDINGS SUMMARY

Below is a simplified example of the type of findings summary Wright Standards may provide at the end of a review.

Preview the sample below, or download the full version.

About / Founder

Wright Standards is an independent review firm for AI-enabled systems and automated workflows where output quality, workflow integrity, and business consequence matter.

Teams are often too close to the build to see every meaningful failure before launch, scale, or wider exposure. Wright Standards reviews workflows already in use, or preparing for use, identifies meaningful issues, ranks severity, and helps leadership understand where risk is concentrated.

Founder

Ashly Wright founded Wright Standards to provide structured, independent review of workflows where hidden failures, weak controls, or unstable outputs can create real business consequences.

Background

Her background includes quality assurance, compliance review, process improvement, and workflow documentation in regulated and high-volume environments, including work supporting large-scale enterprise and nonprofit operating environments.

Review Focus

That background includes documentation review, output assessment, trend reporting, corrective action support, SOP improvement, and quality control disciplines that align closely with independent workflow review.

Credentials

Relevant credentials and training include CAPM, CQIA, CQPA, and Lean Six Sigma training, alongside experience in quality assurance, documentation review, audit readiness, and workflow quality control.

Framework Alignment

Our review approach can support organizations operating with governance expectations informed by the NIST AI Risk Management Framework and ISO/IEC 42001.

Why Clients Engage Wright Standards

• Focused on real business consequences, not just technical defects

• Structured findings that leadership can understand and use

Confidentiality

Review engagements may involve sensitive workflows, internal logic, or operationally important processes. Wright Standards approaches engagements with defined scope boundaries, limited-access handling, and confidentiality in mind. Sensitive materials are reviewed only to the extent needed for the engagement.

Early conversations are focused on fit and scope. No technical materials are needed for an initial inquiry.

Contact

Tell us about the workflow, system, or review need. Wright Standards reviews AI-enabled systems and automated workflows where hidden failures, output instability, or integrity issues could create meaningful business risk.

What happens next

Initial inquiries are reviewed for fit, likely scope, and whether a pilot review, structured review, or exploratory scoping call makes sense. Most inquiries are reviewed within 1–2 business days. Initial conversations are exploratory and focused on fit, scope, and the right review level.

Best fit for

Best fit: customer-facing, operational, or reporting-sensitive workflows where an independent second look would help before launch, scale, or wider exposure.

Helpful details: workflow type, current stage, and the main concern you want reviewed.

Thank you

Thanks — your inquiry has been received. Wright Standards will review it and follow up on fit, scope, and next steps.